Hugging Face Trainer Example

Starting the training loop. Published March 22, 2024. Use the model after training. It is possible to get a list of losses. The `model` attribute always points to the core model (a `PreTrainedModel` subclass when you use a Transformers model). The `Trainer` is a complete training and evaluation loop for PyTorch models implemented in the Transformers library. Asked May 23, 2022 at 15:08. huggingface/transformers (public GitHub repository). We've integrated Llama 3 into Meta AI, our intelligent assistant, which expands the ways people can get things done, create, and connect with Meta AI. Nevermetyou, January 9, 2024, 1:25 am, post 1.
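
`Trainer` stores every logged metric in `trainer.state.log_history`, a list of dicts: training entries carry a `loss` key, evaluation entries an `eval_loss` key, and each entry records its `step`. The helper below is a hypothetical convenience function (not part of Transformers) that pulls both kinds of loss out; the sample `history` values are made up to show the shape of the entries, not real results.

```python
from typing import Dict, List, Tuple


def extract_losses(
    log_history: List[Dict[str, float]],
) -> Tuple[List[Tuple[int, float]], List[Tuple[int, float]]]:
    """Split Trainer-style log entries into (step, loss) pairs for training and evaluation."""
    train_losses: List[Tuple[int, float]] = []
    eval_losses: List[Tuple[int, float]] = []
    for entry in log_history:
        step = int(entry.get("step", -1))  # -1 if an entry has no step recorded
        if "loss" in entry:  # periodic training-loss log
            train_losses.append((step, entry["loss"]))
        if "eval_loss" in entry:  # evaluation log
            eval_losses.append((step, entry["eval_loss"]))
    return train_losses, eval_losses


# Made-up entries shaped like trainer.state.log_history (illustrative values only).
history = [
    {"loss": 0.9, "learning_rate": 5e-5, "epoch": 0.5, "step": 10},
    {"loss": 0.6, "learning_rate": 4e-5, "epoch": 1.0, "step": 20},
    {"eval_loss": 0.7, "epoch": 1.0, "step": 20},
    {"train_runtime": 12.3, "train_loss": 0.75, "epoch": 1.0, "step": 20},
]
train, evals = extract_losses(history)
print(train)  # [(10, 0.9), (20, 0.6)]
print(evals)  # [(20, 0.7)]
```

The final summary entry uses `train_loss` rather than `loss`, so it is deliberately not counted as a per-step training loss.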

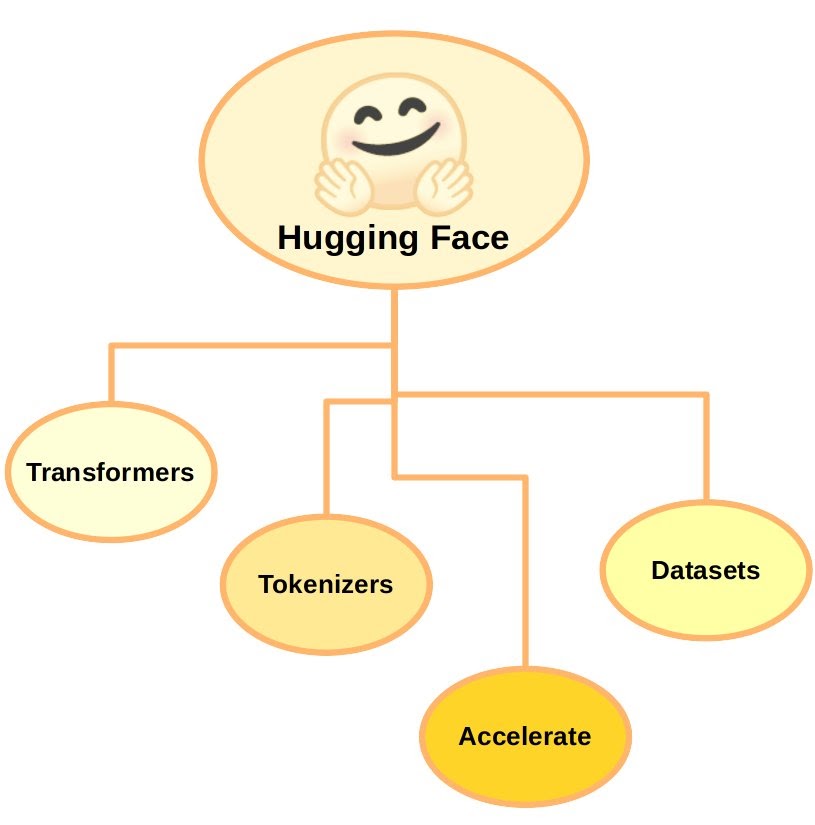

Welcome to a total noob's introduction to Hugging Face Transformers, a guide designed specifically… Hey, I am using the Hugging Face Trainer right now and noticing that every time I finish training using… 🤗 Transformers provides a `Trainer` class optimized for training 🤗 Transformers models, making it easier to start training without manually writing your own training loop.

Can anyone tell me whether we can use `Trainer` for ensembling two Hugging Face models? Odds Ratio Preference Optimization (ORPO), by Jiwoo Hong, Noah Lee, and James Thorne, studies the crucial role of SFT (supervised fine-tuning) within the context of preference alignment.
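
A sketch of the ORPO objective, written from the paper's formulation: the usual SFT negative log-likelihood is combined with an odds-ratio penalty that favors the chosen response over the rejected one, with no separate reference model. Here $y_w$ is the chosen response, $y_l$ the rejected one, $m$ the response length, $\sigma$ the sigmoid, and $\lambda$ the weight on the penalty; check the notation against the paper before relying on it.

```latex
\begin{aligned}
P_\theta(y \mid x) &= \exp\Big(\tfrac{1}{m}\textstyle\sum_{t=1}^{m} \log P_\theta(y_t \mid x, y_{<t})\Big) \\
\mathrm{odds}_\theta(y \mid x) &= \frac{P_\theta(y \mid x)}{1 - P_\theta(y \mid x)} \\
\mathcal{L}_{\mathrm{OR}} &= -\log \sigma\!\left(\log \frac{\mathrm{odds}_\theta(y_w \mid x)}{\mathrm{odds}_\theta(y_l \mid x)}\right) \\
\mathcal{L}_{\mathrm{ORPO}} &= \mathbb{E}_{(x,\, y_w,\, y_l)}\big[\mathcal{L}_{\mathrm{SFT}} + \lambda \cdot \mathcal{L}_{\mathrm{OR}}\big]
\end{aligned}
```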

My assumption was that there would be code changes, since every other Accelerate tutorial showed them, e.g., `+ from accelerate import Accelerator`.

Issue #8143: "Trainer makes RAM go out of memory after a while."

LAMB optimizer: applies the LAMB algorithm for large-batch training, optimizing training efficiency on GPU with support for adaptive learning rates.
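
The LAMB update rule can be sketched in plain Python for a single parameter tensor stored as a flat list. This is a CPU-only illustration of the algorithm, not the GPU-optimized implementation described above; the function name `lamb_step`, the hyperparameter defaults, and the use of the identity as the norm-scaling function are assumptions made for the sketch.

```python
import math
from typing import List


def lamb_step(
    w: List[float],
    grad: List[float],
    m: List[float],
    v: List[float],
    step: int,
    lr: float = 1e-3,
    beta1: float = 0.9,
    beta2: float = 0.999,
    eps: float = 1e-6,
    weight_decay: float = 0.01,
) -> List[float]:
    """One LAMB update for one parameter tensor (flat list).

    Updates the moment buffers m and v in place and returns the new weights.
    `step` is the 1-based update count used for bias correction.
    """
    # Adam-style first and second moment estimates.
    for i, g in enumerate(grad):
        m[i] = beta1 * m[i] + (1 - beta1) * g
        v[i] = beta2 * v[i] + (1 - beta2) * g * g
    # Bias correction.
    m_hat = [mi / (1 - beta1 ** step) for mi in m]
    v_hat = [vi / (1 - beta2 ** step) for vi in v]
    # Adam direction plus decoupled weight decay.
    update = [
        mh / (math.sqrt(vh) + eps) + weight_decay * wi
        for mh, vh, wi in zip(m_hat, v_hat, w)
    ]
    # Layer-wise trust ratio ||w|| / ||update|| (falls back to 1 if either norm is 0).
    w_norm = math.sqrt(sum(wi * wi for wi in w))
    u_norm = math.sqrt(sum(ui * ui for ui in update))
    trust_ratio = w_norm / u_norm if w_norm > 0 and u_norm > 0 else 1.0
    return [wi - lr * trust_ratio * ui for wi, ui in zip(w, update)]
```

Because the step is rescaled by the trust ratio, in this sketch each parameter tensor moves by exactly `lr * ||w||` per update regardless of the gradient's scale, which is the per-layer normalization LAMB relies on for large-batch stability.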

You only need to pass `Trainer` the necessary pieces, such as the model, the training arguments, and the datasets.

Because the `PPOTrainer` needs a reward for each execution step, we need to define a method that computes rewards during each step of the PPO algorithm.
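
What that method looks like depends on the task. As a hypothetical illustration (the name `compute_rewards`, the keyword, and the length target are invented for this sketch and are not part of TRL), here is a rule-based reward that returns one scalar per generated response. In practice the score usually comes from a reward model or classifier, and the values must be converted to whatever format your `PPOTrainer` version expects (for example, tensors).

```python
from typing import List


def compute_rewards(
    responses: List[str], keyword: str = "thanks", target_len: int = 20
) -> List[float]:
    """Hypothetical rule-based reward: one scalar per generated response."""
    rewards: List[float] = []
    for text in responses:
        words = text.split()
        # +1 if the response contains the keyword (case-insensitive).
        score = 1.0 if keyword.lower() in text.lower() else 0.0
        # Subtract a length penalty in [0, 1] for deviating from the target word count.
        score -= min(abs(len(words) - target_len) / target_len, 1.0)
        rewards.append(score)
    return rewards


print(compute_rewards(["Thanks for asking!", ""]))
```

Keeping the reward a pure function of the generated text makes it easy to call once per PPO step on the freshly generated batch.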