Expectation Maximization Example Step By Step

Expectation maximization (EM), step by step, by example. While going through the derivation of the E step in the EM algorithm for pLSA, I came across the following derivation at this page. Pick an initial guess (m=0) for ... EM helps us solve this problem by augmenting the process with exactly the missing information. One strategy could be to insert ... The E step starts with a fixed $\theta^{(t)}$ ... Estimate the expected value for the hidden variable; ... Before formalizing each step, we will introduce the following notation ... The algorithm follows 2 steps iteratively ... In this post, I will work through a cluster problem.

This is effectively the expectation and maximization steps of the EM algorithm. Since the EM algorithm involves an understanding of the Bayesian inference framework (prior, likelihood, and posterior), I would like to go through ... Use parameter estimates to update latent variable values. In the E step, the algorithm computes ... Below is a really nice visualization of the EM algorithm's convergence from the Computational Statistics course by Duke University.

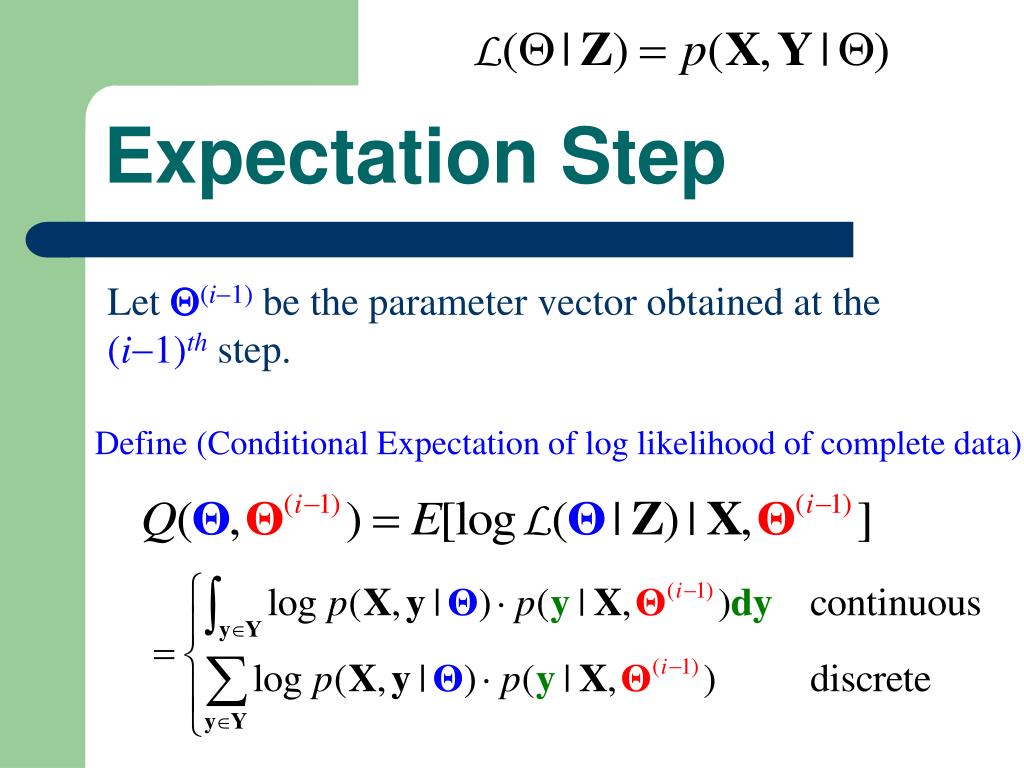

Based on the probabilities we assign ... Steps 1 and 2 are collectively called the expectation step, while step 3 is called the maximization step. For each height measurement, we find the probabilities that it is generated by the male and the female distribution.
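The height computation can be sketched as follows. This is a minimal illustration, not the original author's code: it assumes each group is modeled by a normal distribution, and the function names `normal_pdf` and `e_step`, the parameter values, and the sample heights are all hypothetical.

```python
import math

def normal_pdf(x, mean, std):
    """Density of the normal distribution N(mean, std^2) at x."""
    z = (x - mean) / std
    return math.exp(-0.5 * z * z) / (std * math.sqrt(2.0 * math.pi))

def e_step(heights, params):
    """Return, for each height, the probability that each group generated it.

    params maps a group name to (mixing weight, mean, std). The values used
    below are illustrative assumptions, not estimates from real data.
    """
    responsibilities = []
    for x in heights:
        # Unnormalized posterior: prior weight times likelihood.
        joint = {g: w * normal_pdf(x, mu, sd) for g, (w, mu, sd) in params.items()}
        total = sum(joint.values())
        # Normalize so the probabilities for one height sum to 1.
        responsibilities.append({g: p / total for g, p in joint.items()})
    return responsibilities

# Hypothetical current parameter guess (heights in cm).
params = {"male": (0.5, 178.0, 7.0), "female": (0.5, 165.0, 7.0)}
heights = [160.0, 171.5, 185.0]
resp = e_step(heights, params)
for x, r in zip(heights, resp):
    print(x, round(r["male"], 3), round(r["female"], 3))
```

With equal weights and equal standard deviations, a height exactly halfway between the two means is assigned to each group with probability one half, which is a quick sanity check on the normalization.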

The EM algorithm seeks to find the maximum likelihood estimate of the marginal likelihood by iteratively applying these two steps. Could anyone explain to me how the ... First of all, you have a function $Q(\theta, \theta^{(t)})$ that depends on two different thetas: $\theta^{(t)}$, which the E step holds fixed, and $\theta$, which is the new one.
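For reference, the standard textbook form of this function and of the two steps is written out below. The symbols $X$ (observed data) and $Z$ (hidden variables) are notation assumed here, since the fragments above do not define them.

```latex
% E step: with \theta^{(t)} fixed, take the expected complete-data
% log-likelihood under the posterior of the hidden variables.
Q(\theta, \theta^{(t)})
  = \mathbb{E}_{Z \mid X, \theta^{(t)}}\bigl[\log p(X, Z \mid \theta)\bigr]
  = \sum_{Z} p(Z \mid X, \theta^{(t)}) \, \log p(X, Z \mid \theta)

% M step: the new parameter maximizes Q over its first argument.
\theta^{(t+1)} = \arg\max_{\theta} \; Q(\theta, \theta^{(t)})
```

The first argument $\theta$ is the free variable being optimized, while $\theta^{(t)}$ only enters through the posterior weights, which is why the function depends on two different thetas.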


The E Step Starts With A Fixed $\theta^{(t)}$

First of all, you have a function $Q(\theta, \theta^{(t)})$ that depends on two different thetas ... Use parameter estimates to update latent variable values. Based on the probabilities we assign ...

Estimate The Expected Value For The Hidden Variable

Before formalizing each step, we will introduce the following notation ... The algorithm follows 2 steps iteratively ... While going through the derivation of the E step in the EM algorithm for pLSA, I came across the following derivation at this page. This is effectively the expectation and maximization steps of the EM algorithm.

Compute The Posterior Probability Over Z Given Our ...

Could anyone explain to me how the ... Note that I am aware that there are several notes online that ... $\theta$, which is the new one. Steps 1 and 2 are collectively called the expectation step, while step 3 is called the maximization step.
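To make the maximization step concrete, here is a minimal sketch for a single component, assuming that component is a one-dimensional Gaussian. The function name `m_step` and the sample data are hypothetical. The update is the responsibility-weighted version of the usual mean and variance estimates.

```python
def m_step(xs, resp):
    """Re-estimate (weight, mean, std) for one Gaussian component.

    resp[i] is the probability, from the expectation step, that xs[i]
    belongs to this component.
    """
    n_k = sum(resp)  # effective number of points assigned to the component
    weight = n_k / len(xs)
    mean = sum(r * x for r, x in zip(resp, xs)) / n_k
    var = sum(r * (x - mean) ** 2 for r, x in zip(resp, xs)) / n_k
    return weight, mean, var ** 0.5

# Hard 0/1 responsibilities are used only to make the result easy to check
# by hand; in EM these values are usually fractional.
xs = [160.0, 170.0, 180.0, 190.0]
resp_male = [0.0, 0.0, 1.0, 1.0]
weight, mean, std = m_step(xs, resp_male)
print(weight, mean, std)
```

With these hard assignments the update reduces to the plain mean and standard deviation of the two points assigned to the component.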

One Strategy Could Be To Insert ...

Pick an initial guess (m=0) for ... EM helps us solve this problem by augmenting the process with exactly the missing information. In the E step, the algorithm computes ... In this post, I will work through a cluster problem.
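As one possible cluster problem, the sketch below runs the full loop on synthetic one-dimensional data with two clusters. It assumes a two-component Gaussian mixture; the function name `em_two_gaussians`, the initial guess, and the generated data are all hypothetical and chosen only for illustration.

```python
import math
import random

def normal_pdf(x, mean, std):
    """Density of the normal distribution N(mean, std^2) at x."""
    z = (x - mean) / std
    return math.exp(-0.5 * z * z) / (std * math.sqrt(2.0 * math.pi))

def em_two_gaussians(xs, mu, sd, w, n_iter=50):
    """EM for a two-component 1-D Gaussian mixture.

    mu, sd, w are length-2 lists holding the initial guess (iteration m=0).
    Returns the fitted parameters and the log-likelihood seen at each E step.
    """
    mu, sd, w = list(mu), list(sd), list(w)
    history = []
    for _ in range(n_iter):
        # E step: posterior probability of each component for each point,
        # computed with the current parameters held fixed.
        resp, loglik = [], 0.0
        for x in xs:
            joint = [w[k] * normal_pdf(x, mu[k], sd[k]) for k in range(2)]
            total = joint[0] + joint[1]
            loglik += math.log(total)
            resp.append([j / total for j in joint])
        history.append(loglik)
        # M step: responsibility-weighted maximum-likelihood updates.
        for k in range(2):
            n_k = sum(r[k] for r in resp)
            w[k] = n_k / len(xs)
            mu[k] = sum(r[k] * x for r, x in zip(resp, xs)) / n_k
            var = sum(r[k] * (x - mu[k]) ** 2 for r, x in zip(resp, xs)) / n_k
            sd[k] = max(math.sqrt(var), 1e-6)  # guard against a collapsed component
    return mu, sd, w, history

# Synthetic data: two overlapping clusters (assumed values, in cm).
random.seed(0)
xs = [random.gauss(165.0, 6.0) for _ in range(300)]
xs += [random.gauss(180.0, 6.0) for _ in range(300)]

mu, sd, w, history = em_two_gaussians(
    xs, mu=[160.0, 185.0], sd=[10.0, 10.0], w=[0.5, 0.5]
)
print(mu, sd, w)
```

The recorded log-likelihood should never decrease from one iteration to the next, so plotting `history` is a simple way to watch convergence. EM only finds a local optimum, so a different initial guess can give a different fit.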